Speaking the Same Language About Research

Curt Arledge, Former Director of UX Strategy

What exactly are we talking about when we talk about research?

I recently wrote about how we’re taking steps to do more research at Viget. Part of that effort is educating teammates on high-level, pragmatic topics like how to talk about the benefits of research and respond to client objections. Ultimately, we want to have an effect on company processes and culture, not just hold siloed conversations about theory and the minutiae of individual methods.

With that said, one requirement for building a shared culture is that everyone is speaking the same language. What exactly are we talking about when we talk about research?

I’d like to answer this question from two complementary perspectives:

- What words should we use to talk about research?

- When do we use different types of research?

A research vocabulary

In my experience, one of the biggest impediments to talking with colleagues about research is the lack of a shared vocabulary. For one thing, there are lots of words that mean almost-but-subtly-not the same thing (see UX research, user research, user testing, usability testing). It doesn’t help that some terms originate in academia and then take on lives of their own in practice. Worse still, there are just lots of terms and methods in the space of design research.

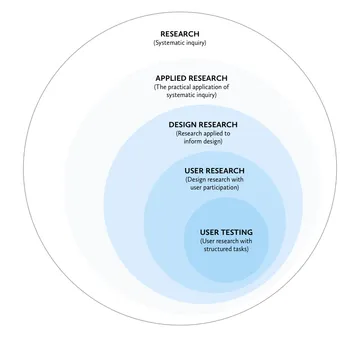

The hierarchical taxonomy of terms below (synthesized from some other people’s ideas and models) is my attempt to simplify things.

Research

Research is systematic inquiry – asking questions in some structured way. It can be either pure (done for the sake of knowledge, i.e. science) or applied. Except for the occasional independent study, all the research we do at Viget is applied research.

Applied research

Applied research answers questions in the service of a product, business, client, etc. At Viget, we apply research to inform design, but also to answer higher-level questions about business strategy, as in market research. Applied research includes exploring new opportunities, monitoring conversation and sentiment on social media, and tracking business metrics.

Design research

Design research is research applied to informing the work of design. Some design research methods involve the direct participation of users (i.e. user research), some involve observing user behavior without their knowing participation (e.g. A/B testing), and others rely purely on expert experience (e.g. expert review, including the more specific heuristic evaluation). Design research also involves getting to know a particular domain with methods like stakeholder interviews and competitive analysis. As I’ve defined it, design research is essentially synonymous with “UX research.”

User research

User research is design research with the participation of representative users. Researchers recruit and interact with people, in person or remotely, in real time or asynchronously. Some user research takes the form of structured testing, but it also includes interviews, direct observation, surveys, and other methods like participatory design.

A rule of thumb: something is user research if it has “participants.” By this logic, I don’t consider A/B testing to be user research, even though we get data from real users. Including methods where users aren’t aware they’re being observed dilutes the meaning of user research, which should imply making the effort to make some type of contact with people.

User testing

User testing is a type of user research that includes structured tasks. It’s important to note that user testing is not about testing users – it’s about getting real users to test a product, UI, navigation structure, etc.

Some people object to the term “user testing” for this reason, and “usability testing” is the more academically correct term, but I find user testing to be a more inclusive term that also covers highly abstracted tests like card sorting. By my definition, a rule of thumb: something is user testing if it has “tasks” that participants perform.
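For readers who think in code, the two rules of thumb above (“participants” and “tasks”) can be read as a small decision procedure over the nested terms. The sketch below is only an illustration of that reading. The `Method` fields and the `classify` function are hypothetical names invented for this example, and the yes/no flags are my own simplification of the definitions, not a formal part of the taxonomy.

```python
from dataclasses import dataclass


@dataclass
class Method:
    """A research method described by three yes/no questions (hypothetical fields)."""
    name: str
    informs_design: bool    # is it applied to informing the work of design?
    has_participants: bool  # do people knowingly take part?
    has_tasks: bool         # do participants perform structured tasks?


def classify(method: Method) -> str:
    """Return the most specific term in the taxonomy that fits the method."""
    if not method.informs_design:
        return "applied research"
    if not method.has_participants:
        return "design research"
    if not method.has_tasks:
        return "user research"
    return "user testing"


# Examples drawn from the definitions above
examples = [
    Method("market research", informs_design=False, has_participants=False, has_tasks=False),
    Method("A/B testing", informs_design=True, has_participants=False, has_tasks=False),
    Method("interviews", informs_design=True, has_participants=True, has_tasks=False),
    Method("card sorting", informs_design=True, has_participants=True, has_tasks=True),
]

for m in examples:
    print(f"{m.name}: {classify(m)}")
```

Because each term is nested inside the one before it, a method labeled “user testing” is also user research, design research, and applied research. The function simply reports the narrowest label that applies.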

When should we research?

The above model is useful for speaking the same language about research, but it doesn’t help us at all in deciding which methods to use for a given problem.

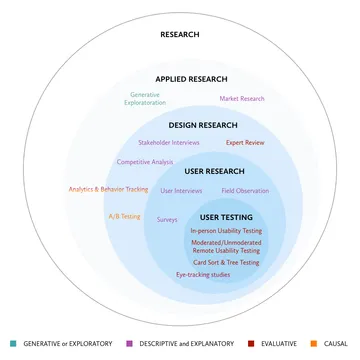

Research is fundamentally about asking questions to avoid making risky assumptions. Asking the right questions at the right time helps us guard against designing the wrong thing and designing it the wrong way. In her very good book Just Enough Research, Erika Hall categorizes research types by the types of questions we need answered in the course of our work:

Generative or Exploratory Research

“What’s up with...?” Generative or exploratory research helps identify problems worth solving. Methods include reviewing existing literature and interviewing and observing people to find unmet needs.

Descriptive and Explanatory Research

“What and how?” Descriptive and explanatory research helps us learn more about a problem so we know how to solve it. Methods include reviewing existing literature and competitors, interviewing stakeholders, and user research.

Evaluative Research

“Are we getting close?” Evaluative research is all about testing our solutions with representative users to validate our designs. Methods can include expert review, but an appropriate form of user testing is usually preferable.

Causal Research

“Why is this happening?” After launch, causal research helps us understand how people are using the thing we built and how we can optimize it. Methods include analytics and A/B testing.

One thing I like about Hall's taxonomy is that it suggests that research is something that happens at every stage of the project cycle and across a number of roles. Research isn’t “owned” by a small group of expert researchers. In Viget terms, research activities may be led by the Strategy, UX, or Data & Analytics teams, but we all share responsibility for asking the right questions to guard against making bad assumptions.

Below is my “unified model” that ties together a range of research types and methods with their purposes in answering different kinds of questions.

In the words of mathematician George E.P. Box, “All models are wrong, but some are useful.” I hope this model of research types, methods, and purposes is useful in clearing up what we’re talking about when we talk about research, or at least useful in provoking further discussion and debate.