How to Create Fast, Searchable Notes for Real-Time Research

Katherine Olvera, Former Senior User Experience Designer

A way to quickly glean insights from your ongoing user testing.

Recently, we’ve been working on a fast-paced product redesign. To ensure that we’re making the right design decisions along the way, we’ve integrated user testing over the length of the project. Each week, we run five 30-minute sessions where we interview a participant and show them designs that we have been developing. We ask them to perform tasks and observe them as they interact with the prototypes.

We quickly realized that it would be challenging to record and analyze our sessions, as our notes would grow unwieldy over the course of the project. To keep our research relevant, we needed to develop a solid system to both track and make sense of our observations during testing.

Challenges

Running ongoing user testing in parallel with design presents a few challenges:

For notes to be usable by the design team, they must be searchable. All information gathered over the course of your sessions should be easily and equally accessible.

In order to affect the design in a fast-paced project, the information gathered during a week of testing needs to be processed and analyzed in real time.

Prototypes change from week to week as we hone designs and flesh out more interactions. Correspondingly, the questions and tasks that we are presenting to participants aren’t consistent from week to week. The system needs to be flexible and link all related notes together.

Taking into account the above considerations, we came up with a new system for taking notes. Once we got it up and running, recording and analyzing testing sessions each week was a breeze. We were able to use one week of testing to inform our design revisions and get the refined designs in front of users the next week to test how they worked. Each subsequent week of testing afforded us the opportunity to make more nuanced observations and discoveries about our users and our proposed solutions. Here are the steps to implementing this system for your team:

Our System

- Set up a team huddle. For a large research effort, there are likely multiple people involved, from recruiting participants and preparing prototypes to moderating and recording the sessions. Before beginning each week of sessions, the team should gather to go over the script and prototypes. Outline the questions you hope to answer through your testing sessions, as well as the specific tasks you will be asking participants to perform.

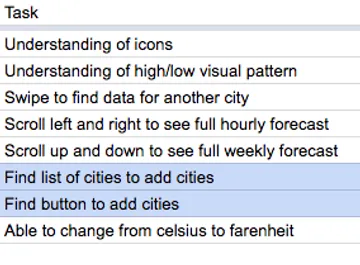

- Define the tasks. This will help your note-takers better evaluate the performance of the task during the session.

- Make sure any note-takers know what a successful completion of the task looks like, as well as any acceptable variations.

- Tasks should be measurable, which may require breaking down complicated interactions into microtasks. For example, imagine you were testing an interaction on Facebook. You may ask a participant to change their profile photo. However, in your notes, you might split that task into these two steps: navigate to profile page and upload photo from computer. This will make it easier to quickly evaluate each step during the session. It also enables you to later parse out where problems lie with more precision.

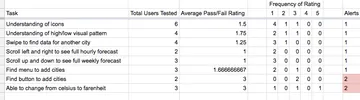

- Define the metrics. Create a rating scale and write corresponding descriptions to evaluate each task consistently. Share this with the team. We used a scale with 1 equating to a pass and 5 equating to a fail. For example, 1 indicated a direct and easy completion of the task, 2 indicated a hesitation before completion, 3 indicated a course-correction, and so forth. This will help you with analysis later.

- Write notes directly in a spreadsheet. This eliminates the need to transfer them before you can analyze them. We recorded each task, our rating of the participant’s performance of the task, and any relevant observations on a new line in a spreadsheet. This set up a straightforward structure for our notes that could be processed later.

- Store pre-test interview information in a separate spreadsheet tab. While interview information may be helpful and interesting as a cross-reference, keep in mind that your goal is to test the prototype, not the user. We kept all the participant information, like their role in a company or how frequently they used this type of product, in a secondary tab. We could always refer to it later to understand outliers or trends, but for the most part, we were more interested in how the participants on the whole interacted with various prototypes.

- Create a tab to analyze your notes. Once you have a few sessions documented, you can start analyzing how your participants perform individual tasks on average. (Make sure that you are using consistent names to tag each task; you can use data validation and drop-downs to help with this.) We used functions to average the ratings for each task, and we added conditional formatting rules to alert us to any task that met certain criteria, such as a high frequency of failures or a high average rating (on our scale, closer to a fail).

- After discovering which tasks participants struggle with, go back and read your notes to understand why. This is where you will be glad that you organized notes by task. Now you can filter your notes to show only those for a specific task and read the observations relevant to that task for all the participants you have observed. Was there a pervasive problem? Did users miss a clickable button? Was the copy unclear? Did a field not look editable? You'll be able to tell rather quickly why users are struggling.
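
If you would rather script the analysis than build it with spreadsheet functions, the same steps can be sketched in a few lines of Python. This is a minimal sketch, not the spreadsheet described above: the `Note` fields, the function names, the thresholds, and the sample rows are all assumptions made up for illustration. It averages the ratings for each task, flags tasks that cross an assumed threshold, and filters the observations for a single task.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

FAIL_RATING = 5  # scale from the article: 1 = pass, 5 = fail


@dataclass
class Note:
    """One spreadsheet row: a single task attempt by one participant."""
    participant: str
    task: str
    rating: int
    observation: str


def summarize(notes):
    """Return {task: (average rating, number of fail ratings)}."""
    ratings = defaultdict(list)
    for note in notes:
        ratings[note.task].append(note.rating)
    return {
        task: (mean(values), sum(1 for v in values if v == FAIL_RATING))
        for task, values in ratings.items()
    }


def flag_tasks(summary, max_average=3.0, max_fails=2):
    """List tasks whose average or fail count reaches the thresholds.

    Both thresholds are hypothetical; pick values that suit your scale.
    """
    return sorted(
        task
        for task, (average, fails) in summary.items()
        if average >= max_average or fails >= max_fails
    )


def observations_for(notes, task):
    """Filter the notes down to one task, like a spreadsheet filter."""
    return [(n.participant, n.observation) for n in notes if n.task == task]


# Invented sample rows, for illustration only.
NOTES = [
    Note("P1", "navigate to profile page", 1, "Went straight to the profile link."),
    Note("P1", "upload photo from computer", 3, "Tried dragging the file first."),
    Note("P2", "navigate to profile page", 2, "Hesitated over the menu."),
    Note("P2", "upload photo from computer", 5, "Did not find the upload button."),
    Note("P3", "navigate to profile page", 1, "No issues."),
    Note("P3", "upload photo from computer", 4, "Needed a hint to continue."),
]

if __name__ == "__main__":
    summary = summarize(NOTES)
    for task in flag_tasks(summary):
        print(task, summary[task])
        for participant, observation in observations_for(NOTES, task):
            print("  ", participant, observation)
```

Keeping one row per task attempt is what makes both the averaging and the filtering simple: every function here only needs to group or select rows by the task name, which is why consistent task names matter.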

This process of note-taking created a feedback loop for us. Each week, it allowed us to validate certain aspects of the design, while also alerting us to the specific parts of the design that weren’t functioning. That way, we could focus our energy on the aspects of the design that warranted the most attention. Although this research process might seem time-intensive, it should save you time in the long run.

Check out the demo sheet we created, or download a blank sheet to start recording your notes.