Benchmarking JavaScript Templating Libraries

Brian Landau, Former Developer

Because Connect-a-Sketch relies heavily on JavaScript, I’m always looking for new ways to improve JS performance. This is, of course, important for ensuring a good user experience on as many machines as possible, not just those with newer computers and browsers. But it’s also important for our ability to add new features, since new features often mean more JavaScript running on the page.

One part of that JavaScript functionality is generating the HTML for page nodes (representations of pages on the canvas). I currently use one of the many JavaScript templating libraries to do this. When I noticed that mustache.js had recently been released, I got interested in exploring what other options are out there and how well they perform.

Methodology

I gathered up a huge list (the only requirement being that a library be independent of other libraries or be a jQuery plugin) and narrowed it down to the ones with template and API syntax I liked and code that looked at least decent at a quick glance. I then created a series of benchmark pages to test the speed of each library. I generally had two tests: a simple test of a basic HTML template, and a loop test that iterated over some data. Some of the libraries support iterating within the template syntax and others don’t; for those that don’t, I iterated with a standard for loop, appending the content to the end of the relevant HTML element. A couple of the libraries (underscore and Tempest) offer the ability to compile the template into a function; for these I also created a third test for that functionality.
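
To make the loop and compiled tests more concrete, here is a minimal sketch of the two ideas. The `render`, `compile`, and `renderList` functions and the `{title}` placeholder syntax are hypothetical stand-ins written for this illustration; they are not the API of any library tested here. The sketch also builds a string rather than appending to an HTML element, so that it runs outside a browser.

```javascript
// Hypothetical placeholder-only template function (not a real library's API).
// Replaces each {key} in the template with the matching value from data.
function render(template, data) {
  return template.replace(/\{(\w+)\}/g, function (match, key) {
    return key in data ? String(data[key]) : match;
  });
}

// Hypothetical "compile" step: split the template once, then return a
// function that only has to join the pieces on each call.
function compile(template) {
  // Splitting on a capturing group keeps the placeholder names,
  // which end up at the odd indexes of the array.
  var parts = template.split(/\{(\w+)\}/);
  return function (data) {
    var out = '';
    for (var i = 0; i < parts.length; i++) {
      out += i % 2 === 0 ? parts[i] : String(data[parts[i]]);
    }
    return out;
  };
}

// Loop test for a library without built-in iteration:
// render the template once per item and append the result.
function renderList(template, items) {
  var html = '';
  for (var i = 0; i < items.length; i++) {
    html += render(template, items[i]);
  }
  return html;
}

var pages = [{ title: 'Home' }, { title: 'About' }];
var listHtml = renderList('<li>{title}</li>', pages);
var compiledItem = compile('<li>{title}</li>');
```

Here `listHtml` holds the markup for both items, and `compiledItem` can be called repeatedly with different data without re-parsing the template, which is the potential saving the compiled test was meant to measure.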

The libraries I ended up testing were:

I then created a jQuery benchmark function based on PPK’s benchmarking methodology. For each test, I would load the page and then call the benchmark function from Safari’s JavaScript console, passing in the test function. Each test function was benchmarked over 1000 iterations, and each benchmark was run 5 times. The results from the 5 runs were averaged and used to produce the results below.
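
The timing procedure can be sketched roughly as follows. This is a plain JavaScript approximation, not the jQuery function I actually used; the names `timeRun` and `benchmark` are made up for this example, and the 1000 iterations and 5 runs are simply passed in as arguments.

```javascript
// Time `iterations` back-to-back calls of testFn; returns elapsed milliseconds.
function timeRun(testFn, iterations) {
  var start = Date.now();
  for (var i = 0; i < iterations; i++) {
    testFn();
  }
  return Date.now() - start;
}

// Repeat the timed run `runs` times and average the elapsed times.
function benchmark(testFn, iterations, runs) {
  var times = [];
  var total = 0;
  for (var r = 0; r < runs; r++) {
    var elapsed = timeRun(testFn, iterations);
    times.push(elapsed);
    total += elapsed;
  }
  return { times: times, average: total / runs };
}

// Example: a trivial test function that just counts its own calls.
var calls = 0;
var result = benchmark(function () { calls++; }, 1000, 5);
```

Running many iterations per measurement matters because a single template render is far too fast to time reliably with millisecond resolution, and averaging several runs smooths out some of the noise from whatever else the browser is doing.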

Results

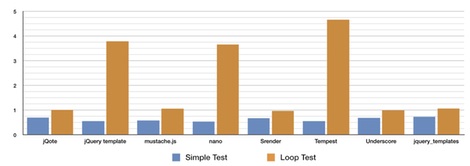

Generally speaking, the simple test ran slightly faster than the loop test. The exception was the libraries without built-in iteration, for which the loop test took significantly longer. This within-library difference is what we’d expect. Overall, though, the libraries all performed about the same.

Assuming you want to handle iteration within the template, the fastest library was mustache.js, followed by Srender and underscore. Although the three libraries that didn’t handle iteration performed badly on the loop test, they actually performed best on the simple test, with the nano library performing best overall.

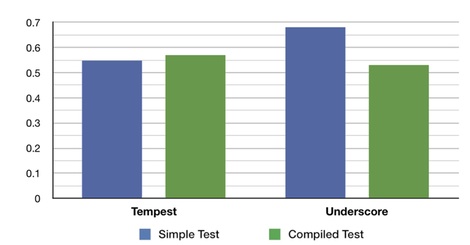

When comparing the simple test to the compiled test, it seems compiling doesn’t offer much of a performance benefit, although it seemed to offer more benefit for the underscore library than for Tempest.

Take your pick

In the end they all seem like reasonable choices, which leads me to believe that most of the other options out there probably perform about equally well. Which one you choose probably depends on whether what you’re templating will iterate over a set of data. If it will, you’d probably want to go with mustache.js; if not, probably nano.

Resources

I’ve put up a repository containing the libraries and HTML benchmark pages, as well as a gist of the benchmarking function.

I’ve also put the original data and summary data into a gist.

Update:

The author of the Tempest library, Nick Fitzgerald, contacted me to let me know that Tempest does not turn templates into pre-compiled functions. However, it does cache the template string. To everyone, sorry about that mixup.